Introduction

Machine learning (ML) and other artificial intelligence techniques can benefit from hardware acceleration. Circuits specifically designed for computing machine learning algorithms reduce power consumption and increase computational speed, compared to conventional processors. Drawing from our past experience in nonlinear electronics and collaborations with UMD colleagues, our group develops chip sets inspired by the brain's architecture to perform ultrafast and low-power ML computation.

Current Work

Focus on Recurrent Neural Networks:

Our current work with ML electronics focuses on a specific paradigm called Reservoir Computing, which excels at tasks requiring rapid inference. Its applications include prediction of complex phenomena like chaotic time series, speech recognition, and rapid image recognition/classification.

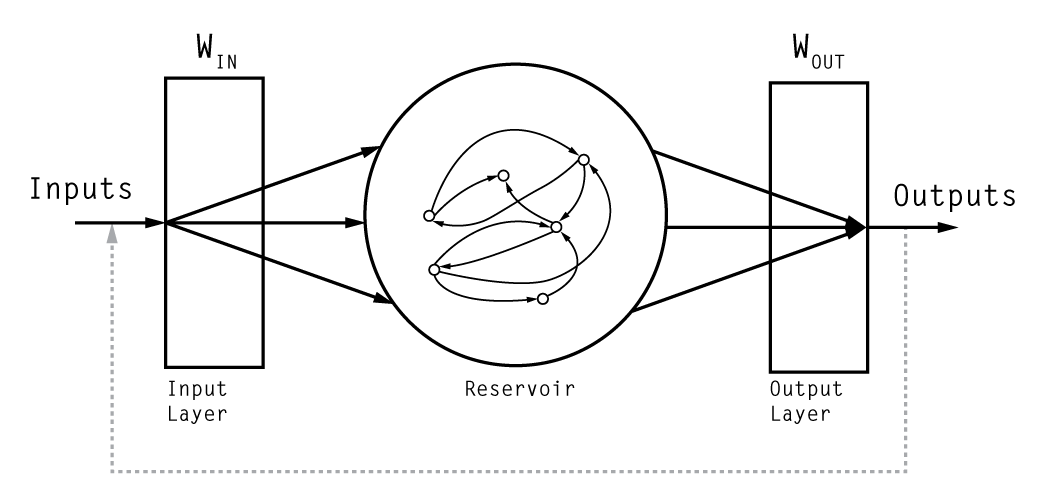

A reservoir computer (RC; see diagram) is a type of recurrent neural network: a large array of communicating artificial neurons, each performing a simple nonlinear operation on its inputs. In an RC, the neuron interconnections are described by a random sparse directed graph. The RC output is typically a linear combination of the outputs of all the nodes.
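As a rough software illustration of this structure, the Python sketch below builds a random sparse directed graph, lets every node apply a simple nonlinearity to its weighted inputs, and reads out a linear combination of the node outputs. The node count, the 5% connection density, the `tanh` nonlinearity, the spectral-radius scaling, and the function names `make_reservoir` and `step` are all assumptions chosen for illustration; they do not describe our hardware.

```python
import numpy as np

def make_reservoir(n_nodes=100, density=0.05, spectral_radius=0.9, seed=0):
    """Weighted adjacency matrix of a random sparse directed graph (illustrative values)."""
    rng = np.random.default_rng(seed)
    mask = rng.random((n_nodes, n_nodes)) < density        # which directed edges exist
    weights = rng.uniform(-1.0, 1.0, (n_nodes, n_nodes)) * mask
    radius = np.max(np.abs(np.linalg.eigvals(weights)))
    if radius > 0:
        weights *= spectral_radius / radius                # common stability heuristic (assumed)
    return weights

def step(state, u, w_res, w_in):
    """One update: each node applies tanh to the weighted sum of its inputs."""
    return np.tanh(w_res @ state + w_in * u)

w_res = make_reservoir()
rng = np.random.default_rng(1)
w_in = rng.uniform(-0.5, 0.5, w_res.shape[0])   # input coupling to each node
w_out = rng.normal(size=w_res.shape[0])         # placeholder readout weights (untrained)

# Drive the reservoir with a short test signal.
state = np.zeros(w_res.shape[0])
for u in np.sin(0.2 * np.arange(50)):
    state = step(state, u, w_res, w_in)

# RC output: a linear combination of the outputs of all the nodes.
y = w_out @ state
```

Here the readout weights are random placeholders; in practice they are the only part of the system that is fitted to data.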

Reservoir computers share the advantages of other types of neural networks, and they further benefit from faster training: only a single output layer is trained, while the recurrent network itself is kept fixed.
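To make the training step concrete, here is a minimal Python sketch in which the recurrent network is generated once and left untouched, and training reduces to a single regularized linear least-squares solve for the output weights. The toy task (predicting the next sample of a sine wave), the ridge regularization, and all numerical values are assumptions for illustration, not our actual training procedure.

```python
import numpy as np

rng = np.random.default_rng(0)
n_nodes, n_steps, washout, ridge = 200, 2000, 100, 1e-4   # illustrative values

# Fixed random reservoir: generated once and never trained.
mask = rng.random((n_nodes, n_nodes)) < 0.05
w_res = rng.uniform(-1.0, 1.0, (n_nodes, n_nodes)) * mask
w_res *= 0.9 / np.max(np.abs(np.linalg.eigvals(w_res)))
w_in = rng.uniform(-0.5, 0.5, n_nodes)

# Toy task: predict the next sample of a sine wave.
signal = np.sin(0.1 * np.arange(n_steps + 1))
inputs, targets = signal[:-1], signal[1:]

# Drive the reservoir and record the state of every node at every step.
states = np.zeros((n_steps, n_nodes))
x = np.zeros(n_nodes)
for t, u in enumerate(inputs):
    x = np.tanh(w_res @ x + w_in * u)
    states[t] = x

# Training: one ridge-regression solve for the linear readout weights.
X, y = states[washout:], targets[washout:]     # discard the initial transient
w_out = np.linalg.solve(X.T @ X + ridge * np.eye(n_nodes), X.T @ y)

pred = X @ w_out
nrmse = np.sqrt(np.mean((pred - y) ** 2)) / np.std(y)
print(f"training NRMSE: {nrmse:.2e}")
```

Because no gradients are propagated through the recurrent network, the cost of training is dominated by recording the node states and solving one linear system.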

Hardware Development:

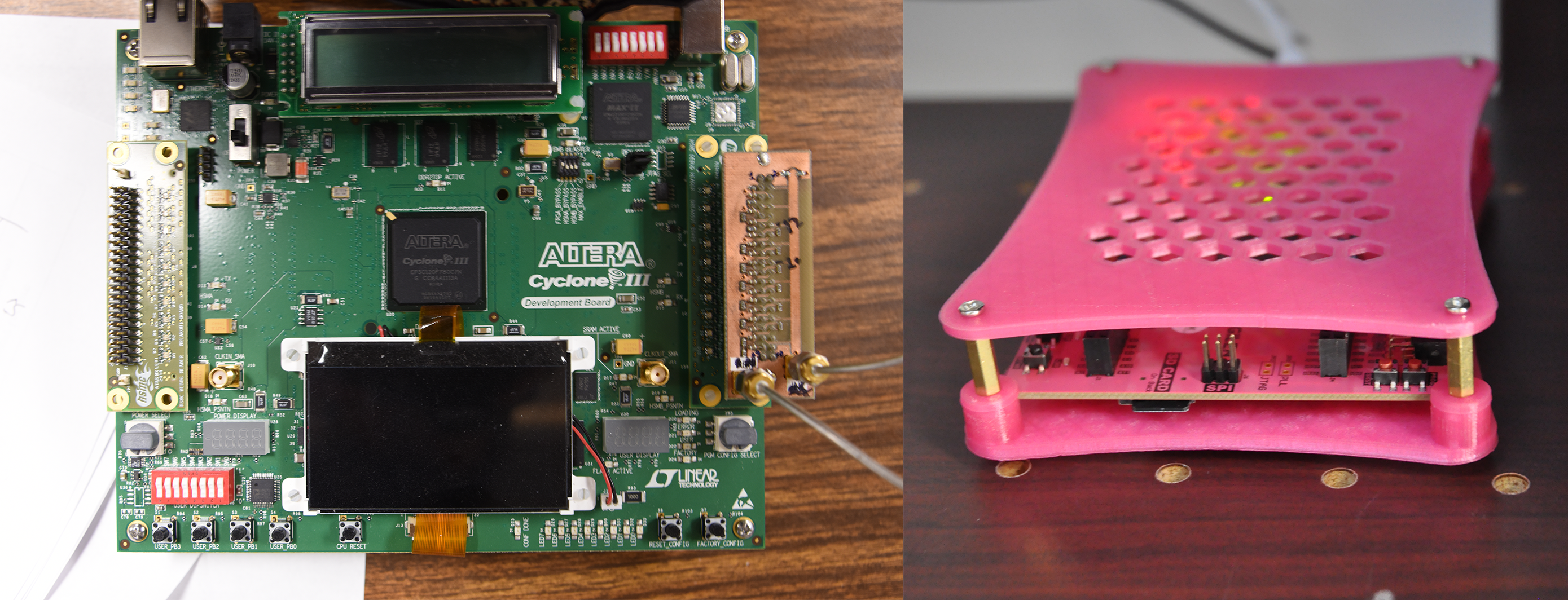

We develop integrated circuits that perform neural network computations at the hardware level, using Application-Specific Integrated Circuits (ASICs) and Field Programmable Gate Arrays (FPGAs), where each gate can be programmed to behave as one of the neurons of the reservoir. We study the dynamics of various recurrent neural networks and optimize them for use in machine learning applications. The photo shows two of the FPGA development boards that we use to study network structure, data input/output methods, and neuron design.

Applications:

Our work with hardware has primarily focused on radio-frequency signal identification and classification, but our group has also worked on various other machine learning applications:

- Image classification

- Noise and distortion reduction

- Prediction of trajectories in particle accelerators

- Turbulence prediction in magnetohydrodynamic systems

Publications

- I. Shani, L. Shaughnessy, J. Rzasa, A. Restelli, B.R. Hunt, H. Komkov and D.P. Lathrop. Dynamics of analog logic-gate networks for machine learning. Chaos. 29 (123130): 1-17 (2019). [DOI] [ADS]

- H. Komkov, A. Restelli, B. Hunt, L. Shaughnessy, I. Shani and D.P. Lathrop. The Recurrent Processing Unit: Hardware for High Speed Machine Learning. arXiv. (2019). [ADS]

- H. Komkov, L. Dovlatyan, A. Perevalov and D.P. Lathrop. Reservoir Computing for Prediction of Beam Evolution in Particle Accelerators. Machine Learning and the Physical Sciences: Workshop at the 33rd Conference on Neural Information Processing Systems (NeurIPS). (2019). [ADS]

Intellectual Property

- Daniel Lathrop, Itamar Shani, Peter Megson, Alessandro Restelli, and Anthony Mautino. Integrated circuit designs for reservoir computing and machine learning, UPCT/US2018/032902, May 2018.